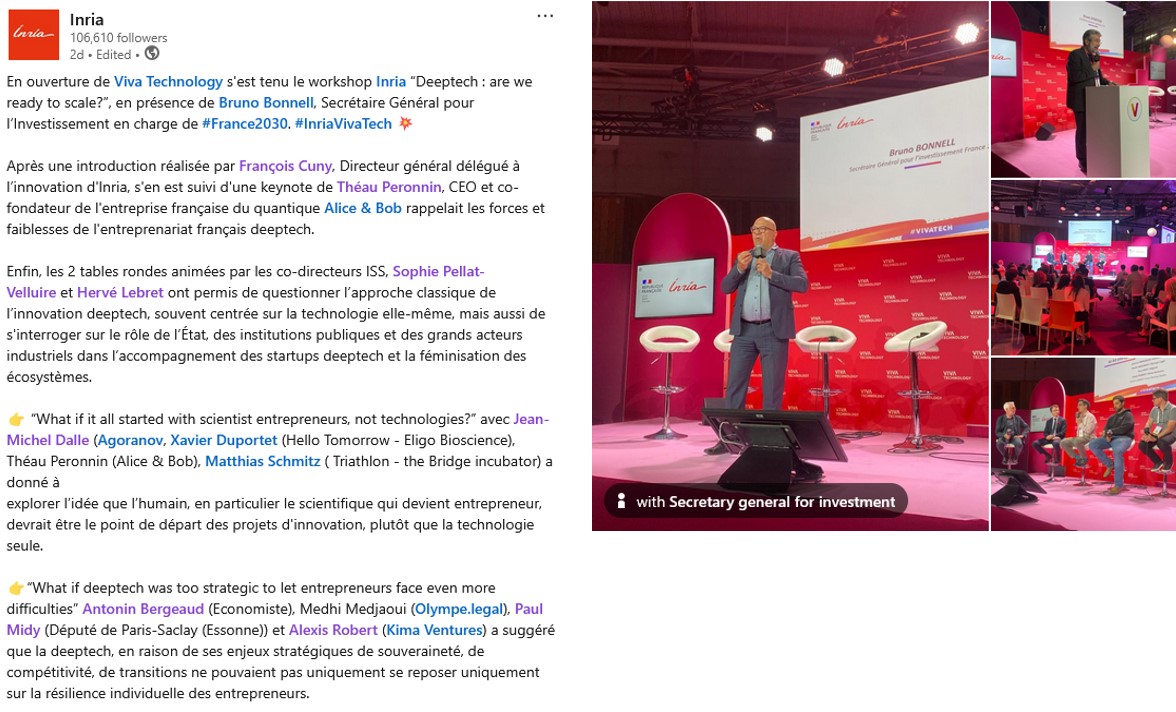

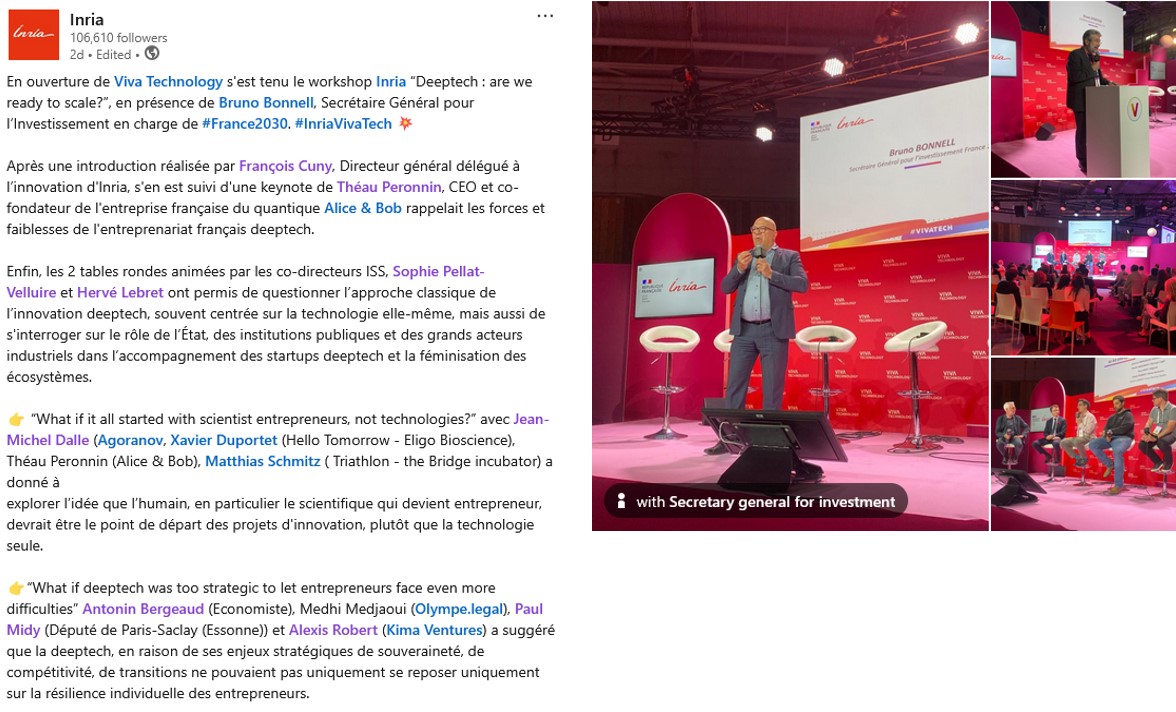

During the Vivatech trade show, Inria organized a workshop on the topic “Deeptech: Are we ready to scale?”

The discussions were rich, in-depth, and fascinating. I’m obviously biased since I was a co-organizer, but I’ve rarely enjoyed discussing the topic so much. So, I’ll provide a subjective summary, adding my own comments on various topics that are dear to my heart. [They will be in brackets and italics.]

What is Deeptech?

This was the starting point for Théau Peronnin, founder and CEO of Alice & Bob: “Above all, it’s a technology with two fundamental attributes. The first is that it has very deep roots in science. If you can understand this technology straight out of business school, perhaps it’s not quite deeptech yet. […] Its second attribute is the ability to create companies, players, or products that will have a strategic impact on the economy.”

[During another roundtable, I heard that the term emerged once the internet, B2B/B2C, and SaaS had diluted the notion of technology into the excesses of “Pets.com”, but that fundamentally, the high-tech of the 1980s and the deeptech of the 2010s are two sides of the same coin. I would add that if something is patentable, it is undoubtedly deeptech.]

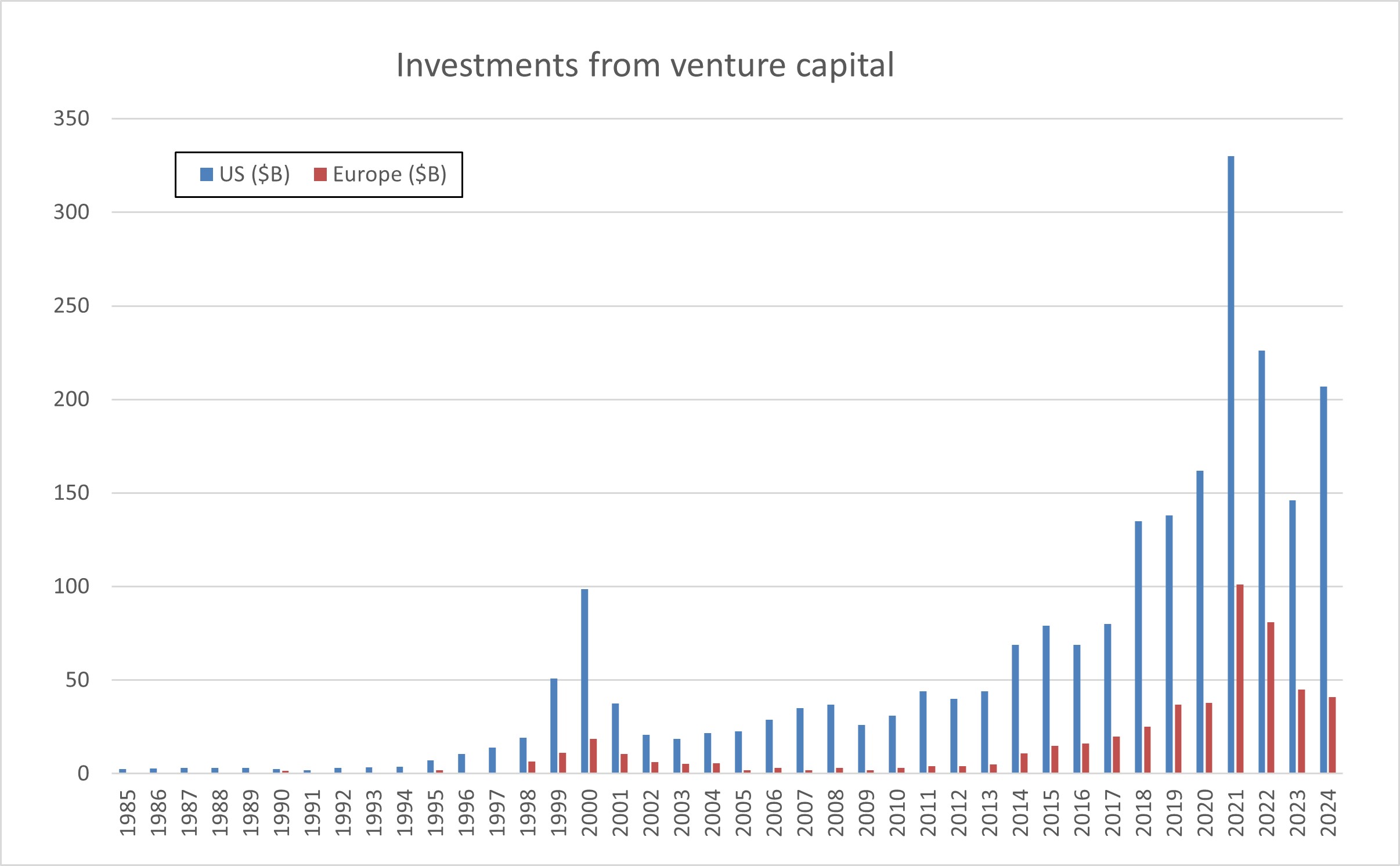

Théau Peronnin then gave his perspective on the challenges facing the French ecosystem. “I can tell you very simply: in France, we are extremely strong on the initial opportunity; we have incredible talent. We are still tied with the Americans for first place in the number of Fields Medals, even though there are five times fewer of us.” […] “And then we have an early-stage venture capital ecosystem that has managed to establish itself in recent years, perhaps even a little too well; one could say it is now slightly saturated.” […] “The weaknesses really lie in the later stages of a deeptech company’s life. The problem is well known but far from solved: financing the so-called growth stages, the moment when companies like Alice & Bob seek to raise several tens or even hundreds of millions of euros to stay in this international R&D race.” [This is a subject that will be addressed later, and I am not sure it is the main issue, but the debate undoubtedly exists. See below!] “There are no suitable European players, which creates an anticipation effect across the entire value chain, and there is a certain reluctance among funds to deploy this capital in deeptech with real intensity and audacity.” […] “Silicon Valley takes its name from the silicon deeptech of the ’70s, ’80s, and ’90s, which created generations of personal fortunes with a very strong appetite for deeptech; these people then directed their capital into the investment funds that still invest in this field. In Europe, there are no such fortunes; they were made elsewhere, in other fields, so we do not yet have the right products, hence the important role of the State in priming the pump.”

“One last point, to introduce the roundtable on the human factor: the relationship to risk-taking. France has an educational and academic culture very focused on excellence, whose downside, or rather the cliché, is a certain fear of failure. In my opinion, this shows in certain schemes that deserve to be rethought, notably the PACTE law’s provision for researchers to work part-time in a startup. This is a very personal opinion I want to share with you: there is no entrepreneurship without risk-taking. You have to get your hands dirty, you have to put your career on the line, you have to have ‘skin in the game’, as the English speakers say. Perhaps this part-time arrangement offers a certain comfort: you know you are still well protected within your research organization while enjoying the pleasure of entrepreneurship. In my opinion, we have to go all in, and that means being able to return to the academic world after a startup failure. So perhaps the lever for more audacity is to make the academic world more attractive to hybrid profiles who have gone through entrepreneurship. That’s my way of launching this roundtable on the human factor, on the men and women who are becoming the entrepreneurs of tomorrow.”

Deeptech is, above all, about bipeds!

Théau Peronnin returned to the human side through a real problem: “A very difficult issue for us is parity, gender diversity, which is horribly hard to crack because we come at the very end of the pipeline that trains these profiles. These are above all technical profiles, with a lot of insularity from going through the Grandes Écoles and all the sociocultural bias those schools carry. As for international diversity, we must have between 20 and 30 nationalities and 30% non-French speakers on the team, to give you a sense of our demographics.”

Xavier Duportet expanded on this human aspect: “We have people who are a little crazy, because in deeptech fewer than 2% of projects reach a mature product on the market […] to get started you have to be a little crazy, and naive too, I think, and you have to believe that what is impossible can become possible. There are lots of things we don’t know, so the unknown is part of our daily work.” […] “The most important thing for us is not necessarily experience; it’s above all curiosity and being enterprising. In deeptech you can’t just apply principles or things you’ve already learned; you have to be ready to face failure almost every day, so you need people who are willing to question themselves and who, deep down, are truly enterprising.”

Jean-Michel Dalle: “There are motivated bipeds who come to talk to us about the microbiome, or motivated bipeds who come to talk to us about quantum computers. Anyone who doesn’t see things from that perspective, that is, from the bipeds’ perspective, is missing something. Of course, we’re going to check that the quantum computer project isn’t just anything, that they didn’t invent it on a Sunday morning after the market. But if we don’t look at it through the eyes of the founders, in my opinion, we need to change careers.”

Théau Peronnin: “The real issue is that the researcher’s passion is understanding, and the problem is that we reap the pleasure of our work very early in the product’s life. I understood what I had to crack to bring this machine to market, but unfortunately, that was only 5 or 10% of the work needed to truly deliver the system.” Behind this, there is still the work of strengthening, producing, distributing, and repositioning. The whole question is how to learn to take pleasure not in having understood, but in making others understand and, even more, in making others adopt what we have cracked, and that is quite a different muscle to develop.

Xavier Duportet: “It’s not a technology or a science that’s going to change or save the world, but a product, and that’s often where things fall short. We still see a lot of researchers who only think about science and technology and who can’t make the mental switch to say: ‘I’m not selling my science; I’m making people dream, making these serious people [investors] dream that I can be the person who will turn science into a product that generates added value for society, but above all, for investors.’”

Marie Paindavoine: “I was lucky at the beginning to be supported in entrepreneurship by structures from the academic world, first by Inria and then by UC Berkeley in the United States, which has an acceleration program. Thanks to them, I learned how to turn this scientific discourse into an entrepreneurial one. At the UC Berkeley accelerator, for six months, we just rehearse the company pitch and learn how to convince, because ultimately, as they tell us: ‘You’ve done the hardest part. You have great technology, you managed to write a thesis in cryptography; you’ve done the hardest part, Marie. Now learning marketing will take you two months, but you have to buckle down for those two months.’ That’s where we need to surround ourselves with an ecosystem that lets people train, because after all, if we managed to do a doctorate, we are able to keep training in the profession of entrepreneurship. But we need to find people who can see not the value of the scientific technology as it can be presented today, but a sort of projected value of this technology.”

Xavier Duportet: “On people, the network, and the ecosystem: I also had the chance to do a PhD between Inria and MIT. At first I wanted to be a researcher, and when I arrived at MIT, I saw all these people starting companies, these professors becoming entrepreneurs. That’s when I was inspired by this generation of entrepreneurial researchers, and I said to myself that if we really want to change the world, it’s not through research, it’s through entrepreneurship. Today in the US, there is Silicon Valley, created 20 or 30 years ago, as Théau said, and there is now this whole generation, not grandpas but slightly older people, who have succeeded. So there is a ‘network effect’ in the US that is super important: the generation of entrepreneurs who have already succeeded is there to help and pass on what they did and the mistakes they made, and they really serve as mentors. That’s where we have a pretty interesting opportunity. In France we shouldn’t put the cart before the horse: we have what BPI has done, all the research institutes, the change that has been underway for 10 years, and we are starting to have companies that are becoming leaders at the international level.”

Matthias Schmitz: “What we have started to do recently is invest in an entrepreneurial mindset much earlier in our students’ education, so we are trying to roll out programs that bring entrepreneurship into all the faculties. For example, Saarland University is investing €1.5M every year with the goal that every single student we have, whether a business student, a Romance studies student, or an engineering student, has at least once during their studies considered whether entrepreneurship could be a career option for them. By doing that, I think we try to solve the problem a little earlier, putting the mindset into people’s heads rather than having them jump into cold water when they are already at the PhD level.”

Marie Paindavoine on being a female entrepreneur: “Do you want the version we hear in France or in the United States? Both! We don’t hear the same thing in France and in the United States. In France, I was immediately asked whether I intended to partner with ‘un’ CEO, with the emphasis on the masculine ‘un’. After my presentation, I was also asked… well, I’m not going to list them all, because it’s of no interest, but there is indeed a halo of suspicion, let’s put it that way. In the United States, I’m not saying they are better than in France: at the UC Berkeley accelerator, there were 2,000 applications, 30 startups selected, and 2 women CEOs, so they are not much better. On the other hand, once you reach that level of selection, when I say I’m starting a business while having children, people tend to congratulate me on the energy it requires instead of asking me how I’m going to arrange childcare and whether my husband agrees with me starting a business with children, something I’ve already been asked in France.”

I’ll stop here and write a separate post about the second roundtable!