January 7, 2015 – November 13, 2015

Category Archives: Must watch or read

A nice way to finish the year!

It seems global warming is here. But there are creative people like Candide Thovex who offer their own solutions. This is very different from my 2010 post, but enjoy the holidays!

The business of biotech – Part 3: Genentech

I should have begun this series of posts about biotech with Genentech (see part 1: Amgen or part 2 about more general stats). Genentech was not the first biotech start-up (that was Cetus), but it was really the one that launched and defined this industry. All this really began with the Cohen-Boyer collaboration. Genentech would have loved to get an exclusive license on their patent about recombinant DNA, but the universities could not agree, for business as well as political reasons. Genentech was an unknown little start-up and genetic engineering a very sensitive topic at the time. Swanson had even tried to offer shares to Stanford and UCSF (the equivalent of 5% of the existing shares at the time).

Please note I already wrote about Genentech here in Bob Swanson & Herbert Boyer: Genentech. But this new post follows my reading of Genentech – The Beginnings of Biotech by Sally Smith Hughes.

Chronology

– November 1972: Meeting of Cohen and Boyer at a conference in Hawaii

– March 1973: First joint lab. experiments

– November 1973: Scientific publication

– November 4, 1974: Patent filing

– May 1975: Cohen becomes an advisor for Cetus

– January 1976: Meeting between Swanson and Boyer

– April 7, 1976: Genentech foundation

– August 1978: first insulin produced

– Q2 1979: 4 research projects with Hoffmann-La Roche (interferon), Monsanto (animal growth hormone), Institut Mérieux (hepatitis B vaccine) and an internal one (thymosin).

– July 1979: first human growth hormone

– October 1982: FDA approval of Genentech’s insulin

– October 1985: FDA approval of human growth hormone

I have to admit I had never heard of the Bancroft Library’s website (http://bancroft.berkeley.edu/ROHO/projects/biosci) for the Program in Bioscience and Biotechnology Studies, “which centerpiece is a continually expanding oral history collection on bioscience and biotechnology [with] in-depth, fully searchable interviews with basic biological scientists from numerous disciplines; with scientists, executives, attorneys, and others from the biotechnology industry.”

The invention of new research and business practices over a very short period

Swanson was captivated: “This idea [of genetic engineering] is absolutely fantastic; it is revolutionary; it will change the world; it’s the most important thing I have ever heard.” [… But Swanson was nearly alone.] “Cetus was not alone in its hesitation regarding the industrial application of recombinant DNA technology. Pharmaceutical and chemical corporations, conservative institutions at heart, also had reservations.” [Page 32] “Whatever practical applications I could see for recombinant DNA… were five to ten years away, and, therefore, there was no rush to get started, from a scientific point of view.” [Page 32] “I always maintain” Boyer reminisced, “that the best attribute we had was our naïveté… I think if we had known about all the problems we were going to encounter, we would have thought twice about starting… Naïveté was the extra added ingredient in biotechnology.” [Page 36]

The book shows the importance of scientific collaborations, not just with Boyer at UCSF but also, for example, with a hospital in Los Angeles. A license was signed with City of Hope Hospital with a 2% royalty on sales of products based on the licensed technology. “[…] negotiated an agreement between Genentech and City of Hope that gave Genentech exclusive ownership of any and all patents based on the work and paid the medical center a 2 percent royalty on sales of products arising from the research.” [Page 57]

Even though City of Hope had received $285M in royalties as of 2000, it was not happy with the outcome. After many trials, the California Supreme Court in 2008 awarded another $300M to City of Hope. So the book shows that these collaborations also led to a great deal of litigation. [Page 58]

In a few years, Genentech could synthesize somatostatin, insulin, human growth hormone and interferon. It is fascinating to read how intense, uncertain and stressful these years were for Swanson, Perkins, Boyer and the small group of Genentech employees and academic partners (Goeddel, Kleid, Heyneker, Seeburg, Riggs, Itakura, Crea), in part because of the emerging competition from other start-ups (Biogen, Chiron) and academic labs (Harvard, UCSF).

“On August 25, 1978 – four days after Goeddel’s insulin chain-joining feat – the two parties signed a multimillion-dollar, twenty-year research and development agreement. For an upfront licensing fee of $500,000, Lilly got what it wanted: exclusive worldwide rights to manufacture and market human insulin using Genentech’s technology. Genentech was to receive 6 percent royalties and City of Hope 2 percent royalties on product sales.” [Page 94] They managed to negotiate a contractual condition limiting Lilly’s use of Genentech’s engineered bacteria to the manufacture of recombinant insulin alone. The technology would remain Genentech’s property, or so they expected. As it turned out, the contract, and that clause in particular, became a basis for a prolonged litigation. In 1990, the courts awarded Genentech over $150 million in a decision determining that Lilly had violated the 1978 contract by using a component of Genentech’s insulin technology in making its own human growth hormone product. [Page 95] Perkins believed that the 8 percent royalty rate was unusually high, at a time when royalties on pharmaceutical products were along the lines of 3 or 4 percent. “It was kind of exorbitant royalty, but we agreed anyway – Lilly was anxious to be first (with human insulin)” […]The big company – small company template that Genentech and Lilly promulgated in molecular biology would become a prominent organizational form in a coming biotechnology industry. [Page 97]

The invention of a new culture

Young as Swanson was, he kept everyone focused on product-oriented research. He continued to have scant tolerance for spending time, effort, and money on research not tied directly to producing marketable products. “We were interested in making something usable that you could turn into a drug, inject in humans, take to clinical trials.” A few years before his premature death, Swanson remarked, “I think one of the things I did best in those days was to keep us very focused on making a product.” His goal-directed management style differed markedly from that of Genentech’s close competitors. [Page 129]

But at the same time, Boyer would guarantee a high level of research quality by encouraging employees to write the best possible scientific articles. This secured the reputation of Genentech in the academic world.

A culture was taking shape at Genentech that had no exact counterpart in industry or academia. The high-tech firms in Silicon Valley and along Route 128 in Massachusetts shared its emphasis on innovation, fast-moving research, and intellectual property creation and protection. But the electronics and computer industries, and every other industrial sector for that matter, lacked the close, significant, and sustained ties with university research that Genentech drew upon from the start and that continue to define the biotechnology industry of today. Virtually every element in the company’s research endeavor – from its scientists to its intellectual and technological foundations – had originated in decade upon decade of accumulated basic-science knowledge generated in academic labs. […] At Boyer’s insistence, the scientists were encouraged to publish and engage in the wide community of science. [Page 131]

But academic values had to accommodate corporate realities: at Swanson’s insistence, research was to lead to strong patents, marketable products, and profit. Genentech’s culture was in short a hybrid of academic values brought in line with commercial objectives and practices. [Page 132]

Swanson was the supportive but insistent slave driver, urging on employees beyond their perceived limits: “Bob wanted everything. He would say, If you don’t have more things on your plate than you can accomplish, then you’re not trying hard enough. He wanted you to have a large enough list that you couldn’t possibly get everything done, and yet he wanted you to try.” […] Fledgling start-ups pitted against pharmaceutical giants could compete mainly by being more innovative, aggressive, and fleet of foot. Early Genentech had those attributes in spades. Swanson expected – demanded – a lot of everyone. His attitude was as Roberto Crea recalled: “Go get it; be there first; we have to beat everybody else… We were small, undercapitalized, and relatively unknown to the world. We had to perform better than anybody else to gain legitimacy in the new industry. Once we did, we wanted to maintain leadership.” […] As Perkins said “Bob would never be accused of lacking a sense of urgency.” […] Even Ullrich, despite European discomfort with raucous American behavior, admitted to being seduced by Genentech’s unswervingly committed, can-do culture. [Page 133]

New exit strategies

Initially Kleiner thought Genentech would be acquired by a major pharma company. It was just a question of when. He approached Johnson and Johnson and “floated the idea of a purchase price of $80 million. The offer fell flat. Fred Middleton [Genentech’s VP of finance], present at the negotiations, speculated that J&J didn’t have “a clue about what to do with this [recombinant DNA] technology – certainly didn’t know what it was worth. They couldn’t fit it in a Band-Aid mold”. J&J executives were unsure how to value Genentech, there being no standard for comparison or history of earnings.” [Page 140]

Perkins and Swanson made one more attempt to sell Genentech. Late in 1979, Perkins, Swanson, Kiley and Middleton boarded a plane for Indianapolis to meet with Eli Lilly’s CEO and others in top management. Perkins suggested a selling price of $100 million. Middleton’s view is that Lilly was hamstrung by a conservative “not invented here” mentality, an opinion supported by the drug firm’s reputation for relying primarily on internal research and only reluctantly on outside contracts. The company’s technology was too novel, too experimental, too unconventional for a conservative pharmaceutical industry to adopt whole-heartedly. [Page 141]

When Genentech successfully developed interferon, a new opportunity arose. Interferon had been discovered in 1957 and thought to prevent virus infection. In November 1978, Swanson signed a confidential letter of intent with Hoffmann-La Roche and a formal agreement in January 1980. They were also lucky: “Heyneker and a colleague attended a scientific meeting in which the speaker – to everyone’s astonishment given the field’s intense competitiveness – projected a slide of a partial sequence of fibroblast interferon. They telephoned the information to Goeddel, who instantly relayed the sequence order to Crea. […] Crea started to construct the required probes. […] Goeddel constructed a “library” of thousands upon thousands of bacterial cells, seeking ones with interferon gene. Using the partial sequence Pestka retrieved, Goeddel cloned full-length DNA sequences for both fibroblast and leukocyte interferon. […] In June 1980, after filing patent protection, Genentech announced the production in collaboration with Roche.” [Page 145] Genentech could now consider going public and, after another fight between Perkins and Swanson, decided to do so. Perkins had seen that the year 1980 was perfect for financing biotech companies through a public offering, but Swanson saw the challenges this would mean for a young company with nearly no revenue or product.

New role models

The 1980-81 period would see the creation of a fleet of entrepreneurial biology-based companies – Amgen, Chiron, Calgene, Molecular Genetics, Integrated Genetics, and firms of a lesser note – all inspired by Genentech’s example of a new organizational model for biological and pharmaceutical research. Before the IPO window closed in 1983, eleven biotech companies in addition to Genentech and Cetus, had gone public*. […] But not only institutions were transformed. Genentech’s IPO transformed Herb Boyer, the small-town guy of blue-collar origins, into molecular biology’s first industrial multimillionaire. For admiring scientists laboring at meager academic salaries in relative obscurity, he became a conspicuous inspiration for how their own research might be reoriented and their reputation enhanced. If unassuming Herb – just a guy from Pittsburgh, as a colleague observed – could found a successful company with all the rewards and renown that entailed, why couldn’t they? [Page 161]

*: According to one source, the companies staging IPOs were Genetic Systems, Ribi Immunochem, Genome Therapeutics, Centocor, Bio-Technology General, California Biotechnology, Immunex, Amgen, Biogen, Chiron, and Immunomedics. (Robbins-Roth, From Alchemy to IPO: The Business of Biotechnology)

Following these three posts, I might write a fourth one about academic licenses in biotechnology if and when I find some time…

Elon Musk and the Secret Sauce of Entrepreneurship (according to Tim Urban)

A student of mine (thanks 🙂 ) just sent me a link to some amazingly great blog articles about Elon Musk. I had never heard of the author, Tim Urban, or his blog Wait But Why, but I will certainly follow his work from now on.

Tim Urban has written four articles about “the world’s most remarkable living entrepreneur.”

Part 1: Elon Musk: The World’s Raddest Man.

Part 2: How Tesla Will Change the World.

Part 3: How (and Why) SpaceX Will Colonize Mars.

Part 4: The Chef and the Cook: Musk’s Secret Sauce.

These 4 posts represent hundreds of pages if you print them. No kidding. I’ve read part 4 and it was a real (positive) shock. Tim Urban explains Musk’s entrepreneurial strengths. I just give some extracts here, but you must read it all (if you find the time).

“I think generally people’s thinking process is too bound by convention or analogy to prior experiences. It’s rare that people try to think of something on a first principles basis. They’ll say, “We’ll do that because it’s always been done that way.” Or they’ll not do it because “Well, nobody’s ever done that, so it must not be good.” But that’s just a ridiculous way to think. You have to build up the reasoning from the ground up —“from the first principles” is the phrase that’s used in physics. You look at the fundamentals and construct your reasoning from that, and then you see if you have a conclusion that works or doesn’t work, and it may or may not be different from what people have done in the past.” […] Musk is an impressive chef for sure, but what makes him such an extreme standout isn’t that he’s impressive — it’s that most of us aren’t chefs at all. […] “When I was a little kid, I was really scared of the dark. But then I came to understand, dark just means the absence of photons in the visible wavelength—400 to 700 nanometers. Then I thought, well it’s really silly to be afraid of a lack of photons. Then I wasn’t afraid of the dark anymore after that.” That’s just a kid chef assessing the actual facts of a situation and deciding that his fear was misplaced. As an adult, Musk said this: “Sometimes people fear starting a company too much. Really, what’s the worst that could go wrong? You’re not gonna starve to death, you’re not gonna die of exposure—what’s the worst that could go wrong?” Same quote, right? […] That’s Elon Musk’s secret sauce. Which is why the real story here isn’t Musk. It’s us. […] People believe thinking outside the box takes intelligence and creativity, but it’s mostly about independence. When you simply ignore the box and build your reasoning from scratch, whether you’re brilliant or not, you end up with a unique conclusion—one that may or may not fall within the box.

Then Tim Urban quotes Steve Jobs from his famous speech at Stanford in 2005 (I think): “When you grow up, you tend to get told the world is the way it is and your life is just to live your life inside the world. Try not to bash into the walls too much. Try to have a nice family life, have fun, save a little money. That’s a very limited life. Life can be much broader once you discover one simple fact. And that is: Everything around you that you call life was made up by people that were no smarter than you. And you can change it, you can influence it, you can build your own things that other people can use. Once you learn that, you’ll never be the same again.”

And all this reminds me of an essay I mentioned in the conclusion of my book, an essay by Wilhelm Reich, the great psychoanalyst, who wrote it in 1945: “Listen, Little Man”. A small essay by the number of pages, a big one in the impact it creates. “I want to tell you something, Little Man; you lost the meaning of what is best inside yourself. You strangled it. You kill it wherever you find it inside others, inside your children, inside your wife, inside your husband, inside your father and inside your mother. You are little and you want to remain little.” The Little Man, it’s you, it’s me. The Little Man is afraid, he only dreams of normality; it is inside all of us. We hide under the umbrella of authority and do not see our freedom anymore. Nothing comes without effort, without risk, without failure sometimes. “You look for happiness, but you prefer security, even at the cost of your spinal cord, even at the cost of your life”.

Tim Urban is absolutely right and you need to read his piece about dogma and tribes. He made me think again of my readings of the great French philosopher Cynthia Fleury and how we need to balance the individuals and the groups and why democracy is a fragile jewel of societies…

PS: I totally forgot to mention a video that a colleague of mine (thanks to her now 🙂 ) mentioned a few days ago. By one of those nice coincidences of life, it is precisely one of the arguments of Tim Urban about why some people are “cooks” (followers or incremental innovators) and others “chefs” (disruptive innovators). Enjoy!

Isaacson’s The Innovators (final thoughts) – is the future about thinking machines?

It is always very sad to finish reading a great book, but Isaacson beautifully finishes his with Ada Lovelace’s considerations (during the 19th century!) about the role of computers. “Ada might also be justified in boasting that she was correct, at least thus far, in her more controversial contention that no computer, no matter how powerful would ever truly be a “thinking” machine. A century after she died, Alan Turing dubbed this “Lady Lovelace’s Objection” and tried to dismiss it by providing an operational definition of a thinking machine. […] But it’s now been more than sixty years, and the machines that attempt to fool people on the test are at best engaging in lame conversation tricks rather than actual thinking. Certainly none has cleared Ada’s higher bar of being able to “originate” any thoughts of its own. […] Artificial intelligence enthusiasts have long been promising, or threatening, that machines like HAL would soon emerge and prove Ada wrong. Such was the promise of the 1956 conference at Dartmouth organized by John McCarthy and Marvin Minsky, where the field of artificial intelligence was launched. The conference concluded that a breakthrough was about twenty years away. It wasn’t.” [Page 468]

Ada, Countess of Lovelace, 1840

John von Neumann realized that the architecture of the human brain is fundamentally different. Digital computers deal in precise units, whereas the brain, to the extent we understand it, is also partly an analog system which deals with a continuum of possibilities, […] not just binary yes-no data but also answers such as “maybe” and “probably” and infinite other nuances, including occasional bafflement. Von Neumann suggested that the future of intelligent computing might require abandoning the purely digital approach and creating “mixed procedures”. [Page 469]

“Artificial Intelligence”

Discussion about artificial intelligence flared up a bit, at least in the popular press, after IBM’s Deep Blue, a chess-playing machine, beat the world champion Garry Kasparov in 1997 and then Watson, its natural-language question-answering computer, won at Jeopardy! But […] these were not breakthroughs of human-like artificial intelligence, as IBM’s CEO was first to admit. Deep Blue won by brute force. […To] one question about the “anatomical oddity” of the former Olympic gymnast George Eyser, Watson answered “What is a leg?” The correct answer was that Eyser was missing a leg. The problem was understanding “oddity”, explained David Ferrucci, who ran the Watson project at IBM. “The computer wouldn’t know that a missing leg is odder than anything else.” […]

“Watson did not understand the questions, nor its answers, nor that some of its answers were right and some wrong, nor that it was playing a game, nor that it won – because it doesn’t understand anything,” according to John Searle [a Berkeley philosophy professor]. “Computers today are brilliant idiots,” said John E. Kelly III, IBM’s director of research. “These recent achievements have, ironically, underscored the limitations of computer science and artificial intelligence,” said Professor Tomaso Poggio, director of the Center for Brains, Minds, and Machines at MIT. “We do not yet understand how the brain gives rise to intelligence, nor do we know how to build machines that are as broadly intelligent as we are.” Ask Google “Can a crocodile play basketball?” and it will have no clue, even though a toddler could tell you, after a bit of giggling. [Pages 470-71] I tried the question on Google and guess what: it gave me the extract by Isaacson…

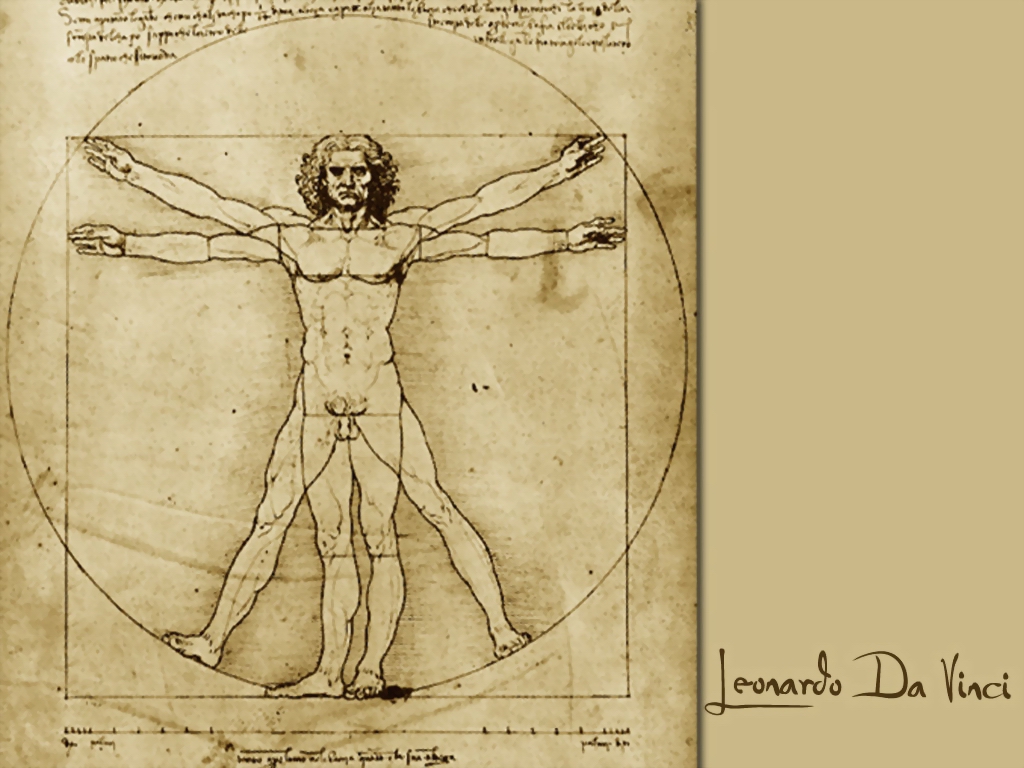

The human brain not only combines analog and digital processes, it also is a distributed system, like the Internet, rather than a centralized one. […] It took scientists forty years to map the neurological activity of the one-millimeter long roundworm, which has 302 neurons and 8,000 synapses. The human brain has 86 billion neurons and up to 150 trillion synapses. […] IBM and Qualcomm each disclosed plans to build “neuromorphic”, or brain-like, computer processors, and a European research consortium called the Human Brain Project announced that it had built a neuromorphic microchip that incorporated “fifty million plastic synapses and 200,000 biologically realistic neuron models on a single 8-inch silicon wafer.” […] These latest advances may even lead to the “Singularity”, a term that von Neumann coined and the futurist Ray Kurzweil and the science fiction writer Vernor Vinge popularized, which is sometimes used to describe the moment when computers are not only smarter than humans but also can design themselves to be even supersmarter, and will thus no longer need us mortals. Isaacson is wiser than I am (as I feel that these ideas are stupid) when he adds: “We can leave the debate to the futurists. Indeed depending on your definition of consciousness, it may never happen. We can leave “that” debate to the philosophers and theologians. “Human ingenuity,” wrote Leonardo da Vinci, “will never devise any inventions more beautiful, nor more simple, nor more to the purpose than Nature does.”” [Pages 472-74]

Computers as a Complement to Humans

Isaacson adds: “There is however another possibility, one that Ada Lovelace would like. Machines would not replace humans but would instead become their partners. What humans would bring is originality and creativity.” [Page 475] After explaining that in a 2005 chess tournament, “the final winner was not a grandmaster nor a state-of-the-art computer, not even a combination of both, but two American amateurs who used three computers at the same time and knew how to manage the process of collaborating with their machines” (page 476) and that “in order to be useful, the IBM team realized [Watson] needed to interact [with humans] in a manner that made collaboration pleasant” (page 477), Isaacson further speculates:

Let us assume, for example, that a machine someday exhibits all of the mental capabilities of a human: giving the outward appearance of recognizing patterns, perceiving emotions, appreciating beauty, creating art, having desires, forming moral values, and pursuing goals. Such a machine might be able to pass a Turing test. It might even pass what we could call the Ada test, which is that it could appear to “originate” its own thoughts that go beyond what we humans program it to do. There would, however, be still another hurdle before we could say that artificial intelligence has triumphed over augmented intelligence. We call it the Licklider Test. It would go beyond asking whether a machine could replicate all the components of human intelligence to ask whether the machine accomplishes these tasks better when whirring away completely on its own or when working in conjunction with humans. In other words, is it possible that humans and machines working in partnership will be indefinitely more powerful than an artificial intelligence machine working alone? If so, then “man-computer symbiosis,” as Licklider called it, will remain triumphant. Artificial Intelligence need not be the holy grail of computing. The goal instead could be to find ways to optimize the collaboration between human and machine capabilities – to forge a partnership in which we let the machines do what they do best, and they let us do what we do best. [Pages 478-79]

Ada’s Poetical Science

At his last such appearance, for the iPad 2 in 2011, Steve Jobs declared: “It’s in Apple’s DNA that technology alone is not enough – that it’s technology married with liberal arts, married with the humanities, that yields us the result that makes our heart sing”. The converse to this paean to the humanities, however, is also true. People who love the arts and humanities should endeavor to appreciate the beauties of math and physics, just as Ada did. Otherwise they will be left at the intersection of arts and science, where most digital-age creativity will occur. They will surrender control of that territory to the engineers. Many people who celebrate the arts and the humanities, who applaud vigorously the tributes to their importance in our schools, will proclaim without shame (and sometimes even joke) that they don’t understand math or physics. They extoll the virtues of learning Latin, but they are clueless about how to write an algorithm or tell BASIC from C++, Python from Pascal. They consider people who don’t know Hamlet from Macbeth to be Philistines, yet they might merrily admit that they don’t know the difference between a gene and a chromosome, or a transistor and a capacitor, or an integral and a differential equation. These concepts may seem difficult. Yes, but so, too, is Hamlet. And like Hamlet, each of these concepts is beautiful. Like an elegant mathematical equation, they are expressions of the glories of the universe. [Pages 486-87]

The last page of Isaacson’s book presents Leonardo da Vinci’s Vitruvian Man, 1492

Halt and Catch Fire – the TV series about innovation (without Silicon Valley and start-ups)

I will always remember the day when one of my former bosses told me I should focus on (watching, making) videos rather than (reading, writing) books. I am a book person so I will probably not follow his advice! Still, from time to time I discover movies about high-tech innovation, entrepreneurship and start-ups.

Halt and Catch Fire is not precisely about start-ups, it is not a documentary, it is not a movie. It is a TV series that is certainly more serious (and less fun) than HBO’s Silicon Valley. It is an interesting accident that I began watching it while reading Isaacson’s The Innovators. Both talk about the early days of personal computers in a (rather) dramatic manner.

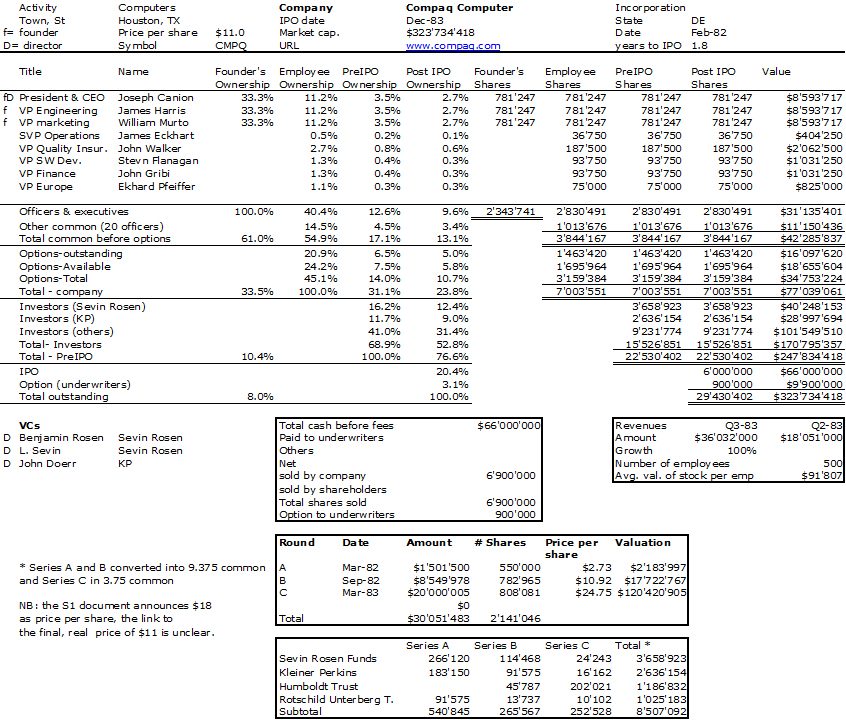

I am still at the beginning of Season 1 so my comments come as much from what I read as from what I saw! Halt and Catch Fire takes place in Texas (not in Silicon Valley), in an established company, Cardiff Electric (not a start-up), where three individuals who should probably never have met, a salesman, an engineer and a geek (not entrepreneurs), will try to prove to the world that they can change it. So why Texas? According to French Wikipedia: “Season 1 (which takes place in 1983-198) is inspired by the creation of Compaq launched in 1982 to develop the first IBM-compatible portable PC. Compaq engineers had to reverse engineer by disassembling the IBM BIOS to make a compatible version rewritten by people who had never seen the IBM BIOS in order not to violate copyrights.” (My Compaq cap. table below.)

Scoot McNairy as Gordon Clark, Mackenzie Davis as Cameron Howe and Lee Pace as Joe MacMillan – Halt and Catch Fire _ Season 1, Gallery – Photo Credit: James Minchin III/AMC

I should credit Marc Andreessen for helping me discover this new AMC TV series. In a long portrait by the New Yorker, the Netscape founder mentions the series: “He pushed a button to unroll the wall screen, then called up Apple TV. We were going to watch the final two episodes of the first season of the AMC drama “Halt and Catch Fire,” about a fictional company called Cardiff, which enters the personal-computer wars of the early eighties. The show’s resonance for Andreessen was plain. In 1983, he said, “I was twelve, and I didn’t know anything about startups or venture capital, but I knew all the products.” He used the school library’s Radio Shack TRS-80 to build a calculator for math homework.” […] “The best scenes with Cameron were when she was alone in the basement, coding.” I said I felt that she was the least satisfactory character: underwritten, inconsistent, lacking in plausible motivation. He smiled and replied, “Because she’s the future.”

According to Wikipedia’s article about the series, “the show’s title refers to computer machine code instruction HCF, the execution of which would cause the computer’s central processing unit to stop working (“catch fire” was a humorous exaggeration).” If the series is not about entrepreneurship and start-ups so far, it is about rebellion, mutiny. There is a beautiful moment where one of the heroes convinces his two colleagues to follow him when they are about to stop. They are on a quest.

I haven’t seen many movies and videos about my favorite topic so let me try and recapitulate:

– I began with Something Ventured, a documentary about the early days of Silicon Valley entrepreneurs and venture capitalists.

– The Startup Kids is another documentary about young (mostly) web entrepreneurs. Often very moving.

– HBO’s Silicon Valley is funnier than HCF but maybe not as good. Only time will tell.

– I saw The Social Network which seems to remain the best fiction movie about all this, but

– I have not seen the two movies about Steve Jobs. It’s apparently not worth watching Jobs (2013) but I will probably try not to miss Steve Jobs (2015).

So as a conclusion, watch the trailer.

The Compaq Capitalization Table at IPO

Walter Isaacson’s The Innovators (part 4) – Steal… or Share?

How many times will I say how great a book Walter Isaacson’s The Innovators – How a Group of Hackers, Geniuses, and Geeks Created the Digital Revolution is? And how many posts will I write about it? Now this is the 4th part! Isaacson shows how collaboration in software contributed to a unique value creation. This may mean sharing but also stealing!

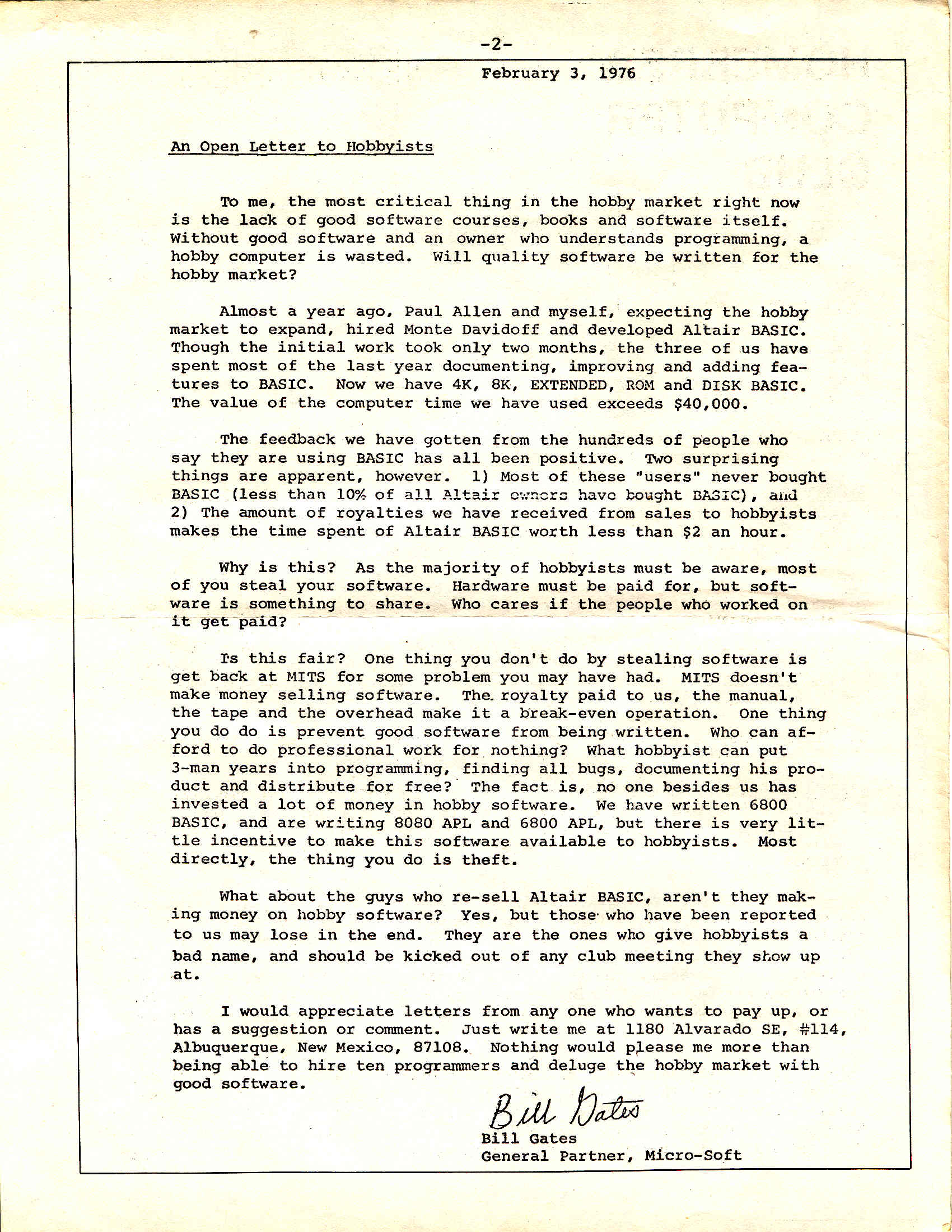

Gates complained to the members of the Homebrew Computer Club about this: “Two surprising things are apparent, however, 1) Most of these “users” never bought BASIC (less than 10% of all Altair owners have bought BASIC), and 2) The amount of royalties we have received from sales to hobbyists makes the time spent on Altair BASIC worth less than $2 an hour. Why is this? As the majority of hobbyists must be aware, most of you steal your software. Hardware must be paid for, but software is something to share. Who cares if the people who worked on it get paid? Is this fair? One thing you don’t do by stealing software is get back at MITS for some problem you may have had. MITS doesn’t make money selling software. […] The thing you do is theft. I would appreciate anyone who wants to pay.” [Page 342 and http://www.digibarn.com/collections/newsletters/homebrew/V2_01/gatesletter.html]

But Isaacson adds some nuance: “Still there was a certain audacity to the letter. Gates was, after all, a serial stealer of computer time, and he had manipulated passwords to hack into accounts from eighth grade through his sophomore year at Harvard. Indeed, when he claimed in his letter that he and Allen had used more than $40,000 worth of computer time to make BASIC, he omitted the fact he had never actually paid for that time. […] Also, though Gates did not appreciate it at the time, the widespread pirating of Microsoft BASIC helped his fledgling company in the long run. By spreading so fast, Microsoft BASIC became a standard, and other computer makers had to license it.” [Page 343]

And what about Jobs and Wozniak? Everyone knows how phone phreaks had created a device that emitted just the right tone chirps to fool the Bell System and cadge free long-distance calls. […] “I have never designed a circuit I was prouder of. I still think it was incredible”. They tested it by calling the Vatican, with Wozniak pretending to be Henry Kissinger needing to speak to the pope. It took a while, but the officials at the Vatican finally realized it was a prank before they woke up the pontiff. [Page 346]

Gates, Jobs and the GUI

And the greatest robbery may have been the GUI – Graphical User Interface. But who stole? Later when he was challenged about pilfering Xerox’s ideas, Jobs quoted Picasso: “Good artists copy, great artists steal. And we have always been shameless about stealing great ideas. They were copier-heads who had no clue about what a computer could do.” [Page 365]

However when Microsoft copied Apple for Windows, it was a different story… “In the early 1980s, before the introduction of the Macintosh, Microsoft had a good relationship with Apple. In fact on the day that IBM launched its PC in August 1981, Gates was visiting Jobs at Apple, which was a regular occurrence since Microsoft was making most of its revenue writing software for the Apple II. Gates was still the supplicant in the relationship. In 1981, Apple had $334 million in revenue, compared to Microsoft’s $15 million. […] Jobs had one major worry about Microsoft: he didn’t want it to copy the graphical user interface. […] His fear that Gates would steal the idea was somewhat ironic, since Jobs himself had filched the concept from Xerox.” [Pages 366-67]

Things would get worse… “Well, Steve, I think there’s more than one way of looking at it. I think it’s more like we both had this rich neighbor named Xerox and I broke into his house to steal the TV set and I found out that you had already stolen it”. [Page 368]

Stallman, Torvalds, free- and open-source

There would be other oppositions. The hacker corps that grew up around GNU [Stallman’s free software] and Linux [Torvalds’ open software] showed that emotional incentives, beyond financial rewards, can motivate voluntary collaboration. “Money is not the greatest of motivations,” Torvalds said. “Folks do their best work when they are driven by passion. When they are having fun. This is as true for playwrights and sculptors and entrepreneurs as it is for software engineers.” There is also, intended or not, some self-interest involved. “Hackers are also motivated, in large part, by the esteem they can gain in the eyes of their peers, improve their reputation, elevate their social status. Open source development gives programmers the chance.” Gates’s “Letter to Hobbyists”, complaining about the unauthorized sharing of Microsoft BASIC, asked in a chiding way, “who can afford to do professional work for nothing?”. Torvalds found that an odd outlook. He and Gates were from two very different cultures, the communist-tinged radical academia of Helsinki versus the corporate elite of Seattle. Gates may have ended up with the bigger house, but Torvalds reaped anti-establishment adulation. “Journalists seemed to love the fact that, while Gates lived in a high-tech lakeside mansion, I was tripping over my daughter’s playthings in a three-bedroom ranch house with bad plumbing in boring Santa Clara,” he said with ironic self-awareness. “And that I drove a boring Pontiac. And answered my own phone. Who wouldn’t love me?” [Pages 378-79]

Which does not make open a friend of free. The disputes went beyond mere substance and became, in some ways, ideological. Stallman was possessed by a moral clarity and unyielding aura, and he lamented that “anyone encouraging idealism today faces a great obstacle: the prevailing ideology encourages people to dismiss idealism as ‘impractical’”. Torvalds, on the contrary, was unabashedly practical, like an engineer. “I led the pragmatists,” he said. “I have always thought that idealistic people are interesting, but kind of boring and scary.” Torvalds admitted to “not exactly being a huge fan” of Stallman, explaining, “I don’t like single-issue people, nor do I think that people who turn the world into black and white are very nice or ultimately very useful. The fact is, there aren’t just two sides to any issue, there’s almost always a range of responses, and ‘it depends’ is almost always the right answer to any big question.” He also believed it should be permissible to make money from open-source software. “Open-source is about letting everybody play. Why should business, which fuels so much of society’s technological advancement, be excluded?”. Software may want to be free, but the people who write it may want to feed their kids and reward their investors. [Page 380]

The Innovators by Walter Isaacson – part 3: (Silicon) Valley

Innovation is about business models – the Atari case

Innovation in (Silicon) Valley: after the chip, innovation saw the arrival of games, software and the Internet. “As they were working on the first Computer Space consoles, Bushnell heard that he had competition. A Stanford grad named Bill Pitts and his buddy Hugh Tuck from California Polytechnic had become addicted to Spacewar, and they decided to use a PDP-11 minicomputer to turn it into an arcade game. […] Bushnell was contemptuous of their plan to spend $20,000 on equipment, including a PDP-11 that would be in another room and connected by yards of cable to the console, and then charge ten cents a game. “I was surprised at how clueless they were about the business model,” he said. “Surprised and relieved. As soon as I saw what they were doing, I knew they’d be no competition”.

Galaxy Game by Pitts and Tuck debuted at Stanford’s Tresidder student union coffeehouse in the fall of 1971. Students gathered around each night like cultists in front of a shrine. But no matter how many lined up their coins to play, there was no way the machine could pay for itself, and the venture eventually folded. “Hugh and I were both engineers and we didn’t pay attention to business issues at all,” conceded Pitts. Innovation can be sparked by engineering talent, but it must be combined with business skills to set the world afire.

Bushnell was able to produce his game, Computer Space, for only $1,000. It made its debut a few weeks after Galaxy Game at the Dutch Goose bar in Menlo Park near Palo Alto and went on to sell a respectable 1,500 units. Bushnell was the consummate entrepreneur: inventive, good at engineering, and savvy about business and consumer demand. He was also a great salesman. […] When he arrived back at Atari’s little rented office in Santa Clara, he described the game to Alcorn [Atari’s co-founder], sketched out some circuits, and asked him to build the arcade version of it. He told Alcorn he had signed a contract with GE to make the game, which was untrue. Like many entrepreneurs, Bushnell had no shame about distorting reality in order to motivate people.” [Pages 209-211]

“Innovation requires having three things: a great idea, the engineering talent to execute it, and the business savvy (plus deal-making moxie) to turn it into a successful product. Nolan Bushnell scored a trifecta when he was twenty-nine, which is why he, rather than Bill Pitts, Hugh Tuck, Bill Nutting, or Ralph Baer, goes down in history as the innovator who launched the video game industry.” [Page 215]

You may also listen to Bushnell directly. This is Something Ventured and the Atari story runs from 30’07” to 36’35” (you may go to YouTube directly for the right timing).

The debate about intelligence of machines

Chapter 7 is about the beginnings of the Internet. Isaacson addresses a topic which has come back as a hot debate these days: will machines and the computer in particular replace humans, with or despite their intelligence, creativity and innovation capabilities? I feel close to Isaacson whom I quote from page 226: “Licklider sided with Norbert Wiener, whose theory of cybernetics was based on humans and machines working closely together, rather than with their MIT colleagues Marvin Minsky and John McCarthy, whose quest for artificial intelligence involved creating machines that could learn on their own and replace human cognition. As Licklider explained, the sensible goal was to create an environment in which humans and machines “cooperate in making decisions.” In other words, they would augment each other. “Men will set the goals, formulate the hypotheses, determine the criteria, and perform the evaluations. Computing machines will do the routinizable work that must be done to prepare the way for insights and decisions in technical and scientific thinking.””

The Innovator’s dilemma

In the same chapter which tries to describe who were the inventors (more than the innovators) in the case of the Internet – J.C.R. Licklider, Bob Taylor, Larry Roberts, Paul Baran, Donald Davies, or even Leonard Kleinrock – and why it was invented – an unclear motivation between the military objective of protecting communications in case of a nuclear attack or the civilian one of helping researchers in sharing resources – Isaacson shows once again the challenge of convincing established players.

Baran then collided with one of the realities of innovation, which was that entrenched bureaucracies are resistant to change. […] He tried to convince AT&T to supplement its circuit-switched voice network with a packet-switched data network. “They fought it tooth and nail,” he recalled. “They tried all sorts of things to stop it.” [AT&T would go as far as organizing a series of seminars that would involve 94 speakers] “Now do you see why packet switching wouldn’t work?” Baran simply replied, “No”. Once again, AT&T was stymied by the innovator’s dilemma. It balked at considering a whole new type of data network because it was so invested in traditional circuits. [Pages 240-41]

[Davies] came up with a good old English word for them: packets. In trying to convince the General Post Office to adopt the system, Davies ran into the same problem that Baran had knocking at the door of AT&T. But they both found a fan in Washington. Larry Roberts not only embraced their ideas; he also adopted the word packet.

The entrepreneur is a rebel (who loves power)

One hard-core hacker, Steve Dompier, told of going down to Albuquerque in person to pry loose a machine from MITS, which was having trouble fulfilling orders. By the time of the third Homebrew meeting in April 1975, he had made an amusing discovery. He had written a program to sort numbers, and while he was running it, he was listening to a weather broadcast on a low-frequency transistor radio. “The radio started going zip-zzziiip-ZZZIIIPP at different pitches,” and Dompier said to himself, “Well, what do you know! My first peripheral device!” So he experimented. “I tried some other programs to see what they sounded like, and after about eight hours of messing around, I had a program that could produce musical tones and actually make music”. [Page 310]

“Dompier published his musical program in the next issue of the People’s Computer Company, which led to a historically noteworthy response from a mystified reader. “Steven Dompier has an article about the musical program that he wrote for the Altair in the People’s Computer Company,” Bill Gates, a Harvard student on leave writing software for MITS in Albuquerque, wrote in the Altair newsletter. “The article gives a listing of his program and the musical data for ‘The Fool on the Hill’ and ‘Daisy.’ He doesn’t explain why it works and I don’t see why. Does anyone know?” The simple answer was that the computer, as it ran the programs, produced frequency interference that could be controlled by the timing loops and picked up as tone pulses by an AM radio.

By the time his query was published, Gates had been thrown into a more fundamental dispute with the Homebrew Computer Club. It became archetypal of the clash between the commercial ethic that believed in keeping information proprietary, represented by Gates [and Jobs], and the hacker ethic of sharing information freely, represented by the Homebrew crowd [and Wozniak].” [Page 311]

Isaacson, through his description of Gates and Jobs, explains what an entrepreneur is.

“Yes, Mom, I’m thinking,” he replied. “Have you ever tried thinking?” [P.314] Gates was a serial obsessor. […] he had a confrontational style [… and he] would escalate the insult to be “the stupidest thing I’ve ever heard.” [P.317] Gates pulled a power play that would define his future relationship with Allen. As Gates describes it, “That’s when I say ‘Okay, but I’m going to be in charge. And I’ll get used to being in charge, and it’ll be hard to deal with me from now on unless I’m in charge. If you put me in charge, I’m in charge of this and anything else we do.’ ” [P.323] Like many innovators, Gates was rebellious just for the hell of it. [P.331] “An innovator is probably a fanatic, somebody who loves what they do, works day and night, may ignore normal things to some degree and therefore be viewed as a bit imbalanced.” […] Gates was also a rebel with little respect for authority, another trait of innovators. [P.338]

Allen assumed that his partnership with Gates would be fifty-fifty. […] but Gates had insisted on being in charge. “It’s not right for you to get half. […] I think it should be sixty-forty.” […] Worse yet, Gates insisted on revisiting the split two years later. “I deserve more than 60 percent.” His new demand was that the split be 64-36. Born with a risk-taking gene, Gates would cut loose late at night by driving at terrifying speeds up the mountain roads. “I decided it was his way of letting off steam,” Allen said. [P.339]

Gates arrested for speeding, 1977. [P.312]

“There is something indefinable in an entrepreneur, and I saw that in Steve,” Bushnell recalled. “He was interested not just in engineering, but also in the business aspects. I taught him that if you act like you can do something, then it will work. I told him, pretend to be completely in control and people will assume that you are.” [P.348]

The concept of the entrepreneur as a rebel is not new. In 2004, Pitch Johnson, one of the earliest VCs in Silicon Valley, claimed “Entrepreneurs are the revolutionaries of our time.” Freeman Dyson has written “The Scientist as a Rebel”. And you should read Nicolas Colin’s analysis of entrepreneurial ecosystems: Capital + know-how + rebellion = entrepreneurial economy. Yes, rebels who love power…

The Innovators by Walter Isaacson – part 2 : Silicon (Valley)

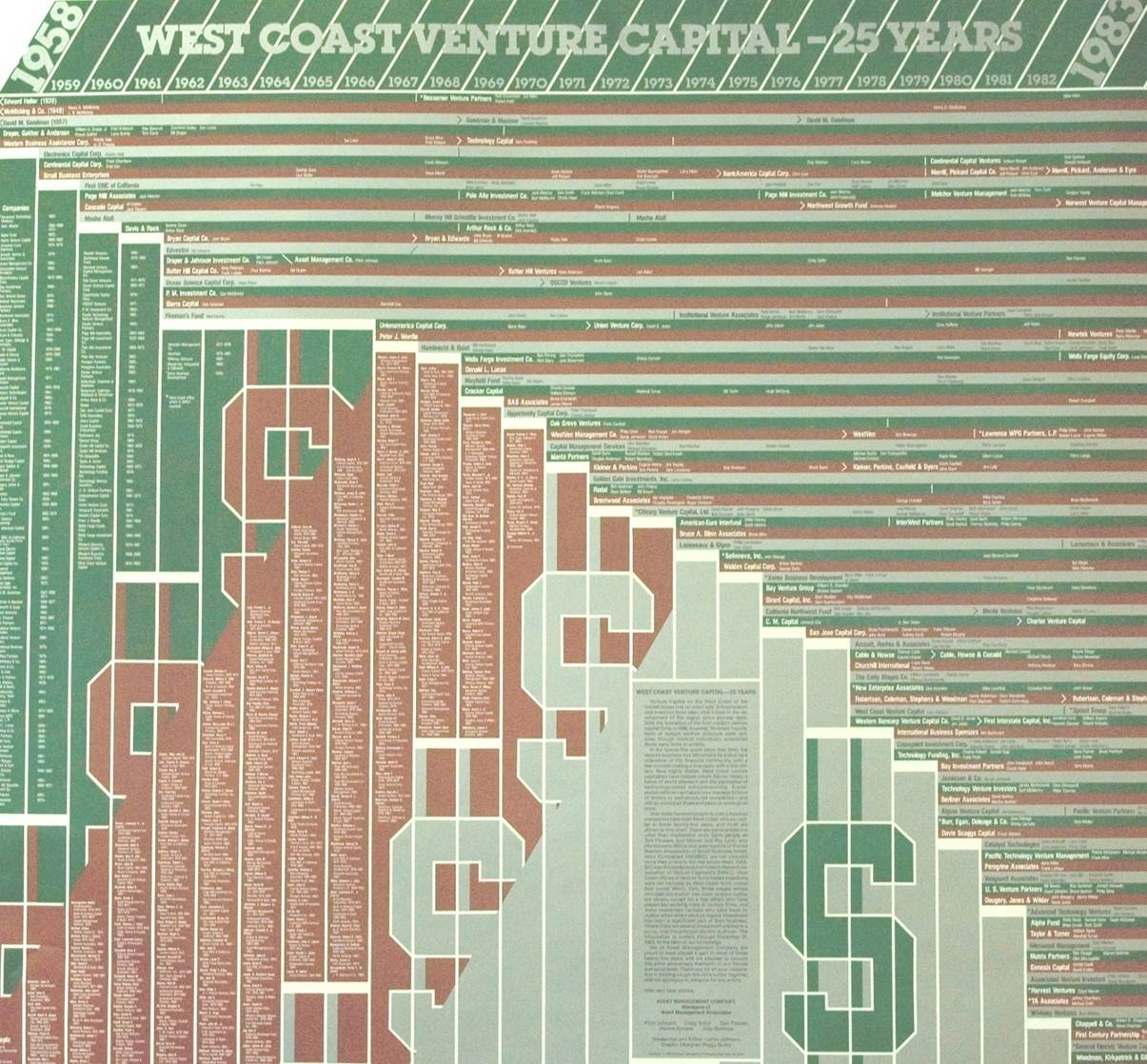

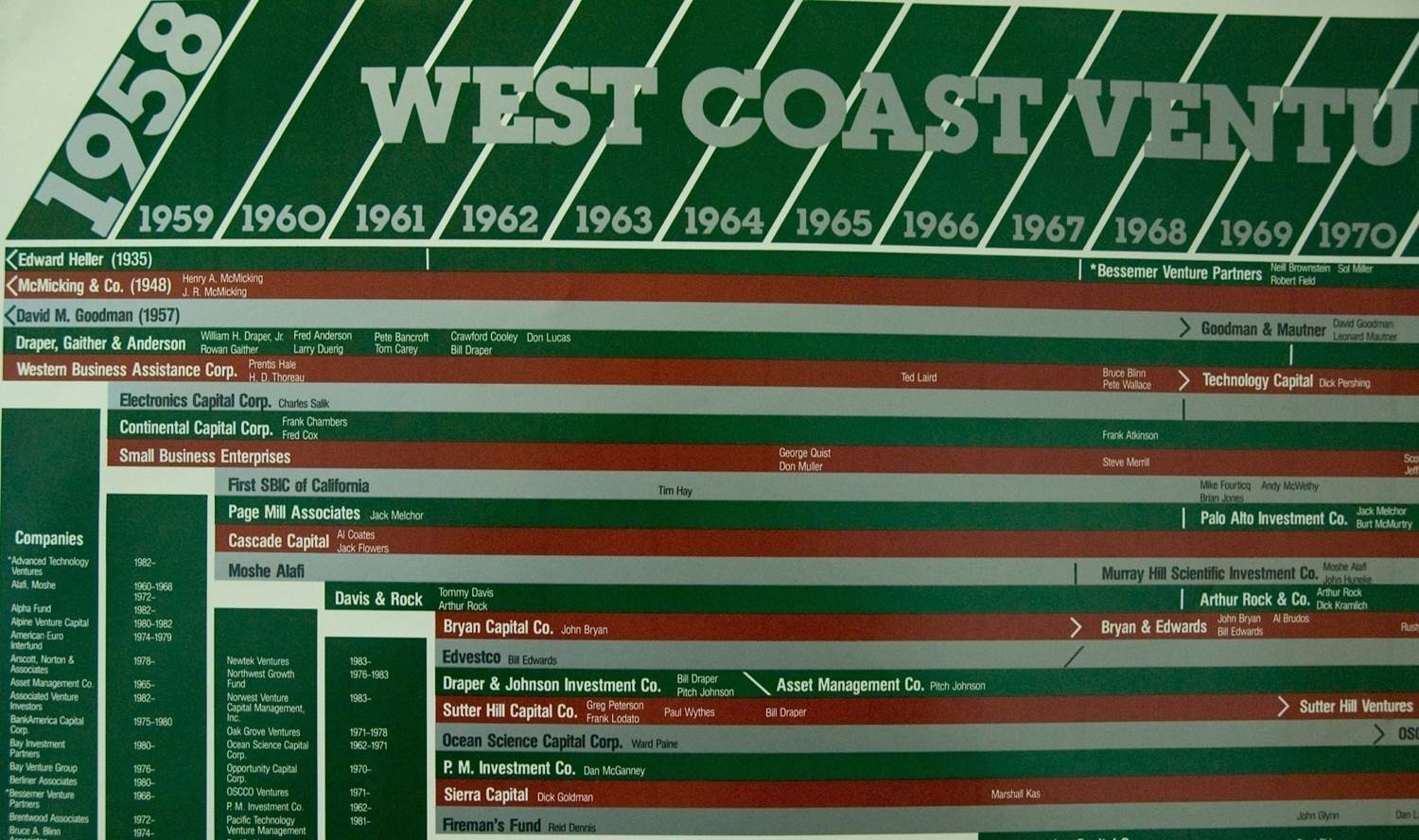

What I am reading now, following my recent post The Complexity and Beauty of Innovation according to Walter Isaacson, is probably much better known: innovation in Silicon Valley at the time of silicon – Fairchild, Intel and the other Fairchildren. I have my own archive of nice posters from those days: one about the start-up / entrepreneur genealogy, with a zoom on Fairchild and one on Intel, and one about the investor genealogy.

Entrepreneurs…

“There were internal problems in Palo Alto. Engineers began defecting, thus seeding the valley with what became known as Fairchildren: companies that sprouted from spores emanating from Fairchild.” [Page 184] “The valley’s main artery, a bustling highway named El Camino Real, was once the royal road that connected California’s twenty-one mission churches. By the early 1970s – thanks to Hewlett-Packard, Fred Terman’s Stanford Industrial Park, William Shockley, Fairchild and its Fairchildren – it connected a bustling corridor of tech companies. In 1971, the region got a new moniker. Don Hoefler, a columnist for the weekly trade paper Electronic News, began writing a series of columns entitled “Silicon Valley USA,” and the name stuck.” [Page 198]

… and Investors

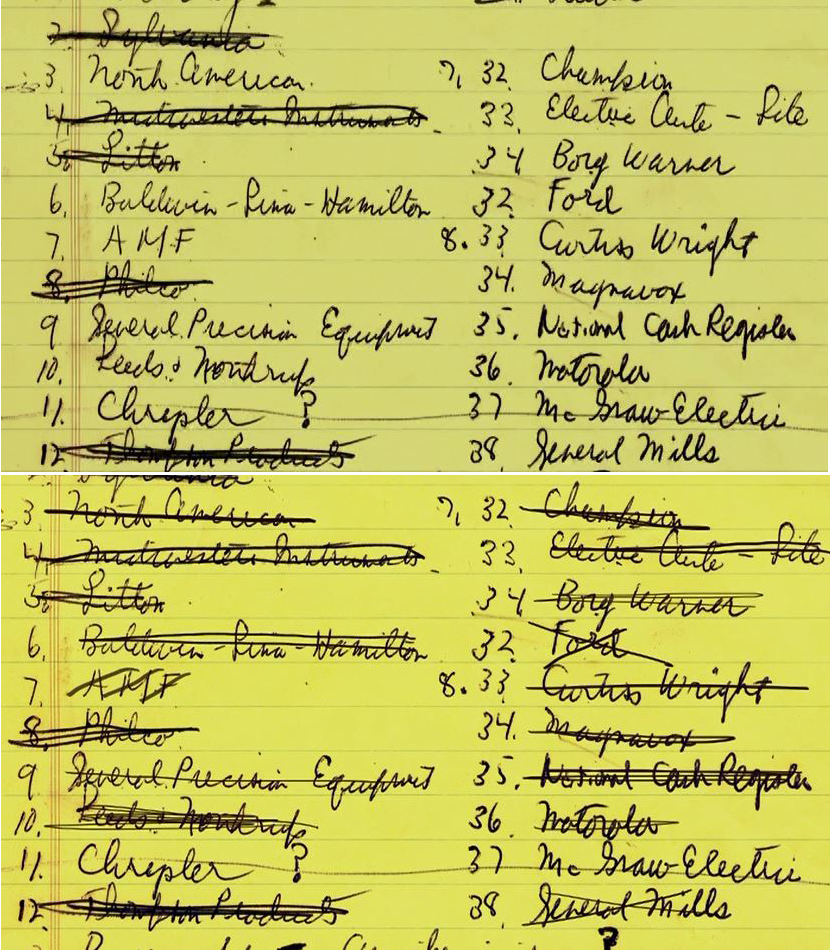

“In the eleven years since he had assembled the deal for the traitorous eight to form Fairchild Semiconductor, Arthur Rock had helped to build something that was destined to be almost as important to the digital age as the microchip: venture capital.” [Page 185] “When he had sought a home for the traitorous eight in 1957, he pulled out a single piece of legal-pad paper, wrote a numbered list of names, and methodically phoned each one, crossing off the names as he went down the list. Eleven years later, he took another sheet of paper and listed people who would be invited to invest and how many of the 500,000 shares available at $5 apiece he would offer to each. […] It took them less than two days to raise the money. […] “All I had to tell people was that it was Noyce and Moore. They didn’t need to know much else.” [Pages 187-88]

The Intel culture

“There arose at Intel an innovation that had almost as much of an impact on the digital age as any [other]. It was the invention of a corporate culture and management style that was the antithesis of the hierarchical organization of East Coast companies.” [Page 189] “The Intel culture, which would permeate the culture of Silicon Valley, was a product of all three men [Noyce, Moore and Grove]. […] It was devoid of the trappings of hierarchy. There were no reserved parking places. Everyone, including Noyce and Moore, worked in similar cubicles. […] “There were no privileges anywhere,” recalled Ann Bowers, who was the personnel director and later married Noyce [she would then become Steve Jobs’ first director of human resources]. “We started a form of company culture that was completely different than anything that had been before. It was a culture of meritocracy.”

It was also a culture of innovation. Noyce had a theory that he developed after bridling under the rigid hierarchy at Philco. The more open and unstructured a workplace, he believed, the faster new ideas would be sparked, disseminated, refined and applied.” [Pages 192-193]

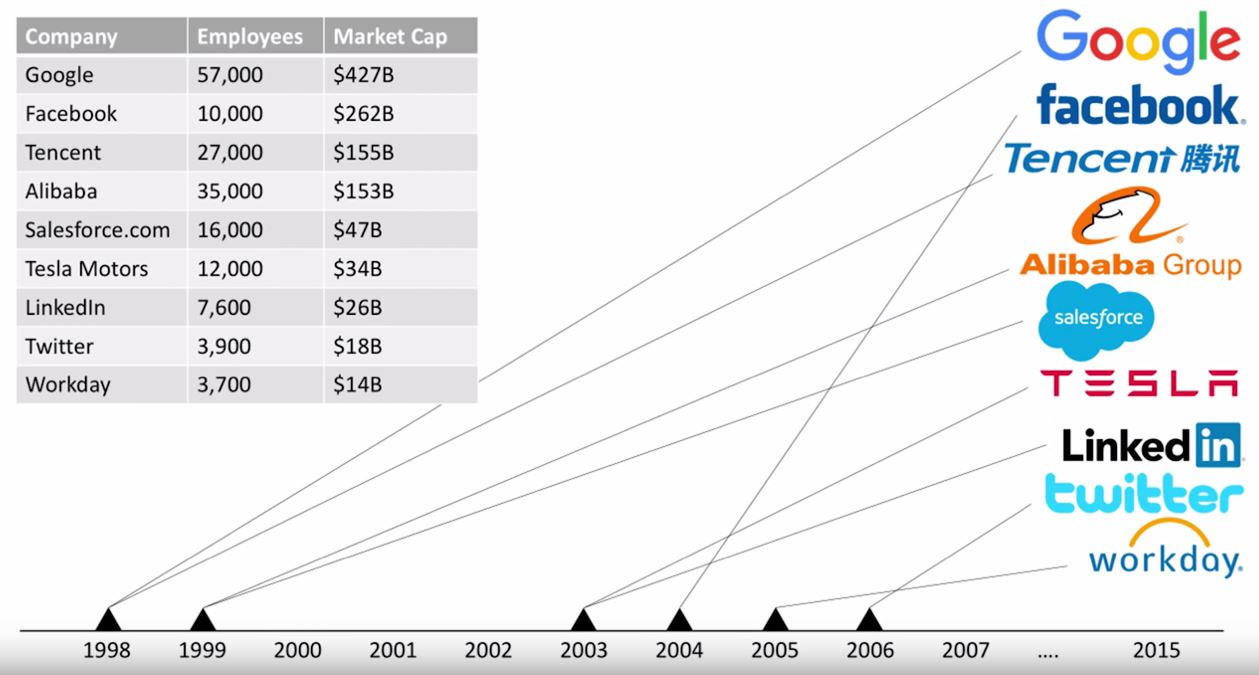

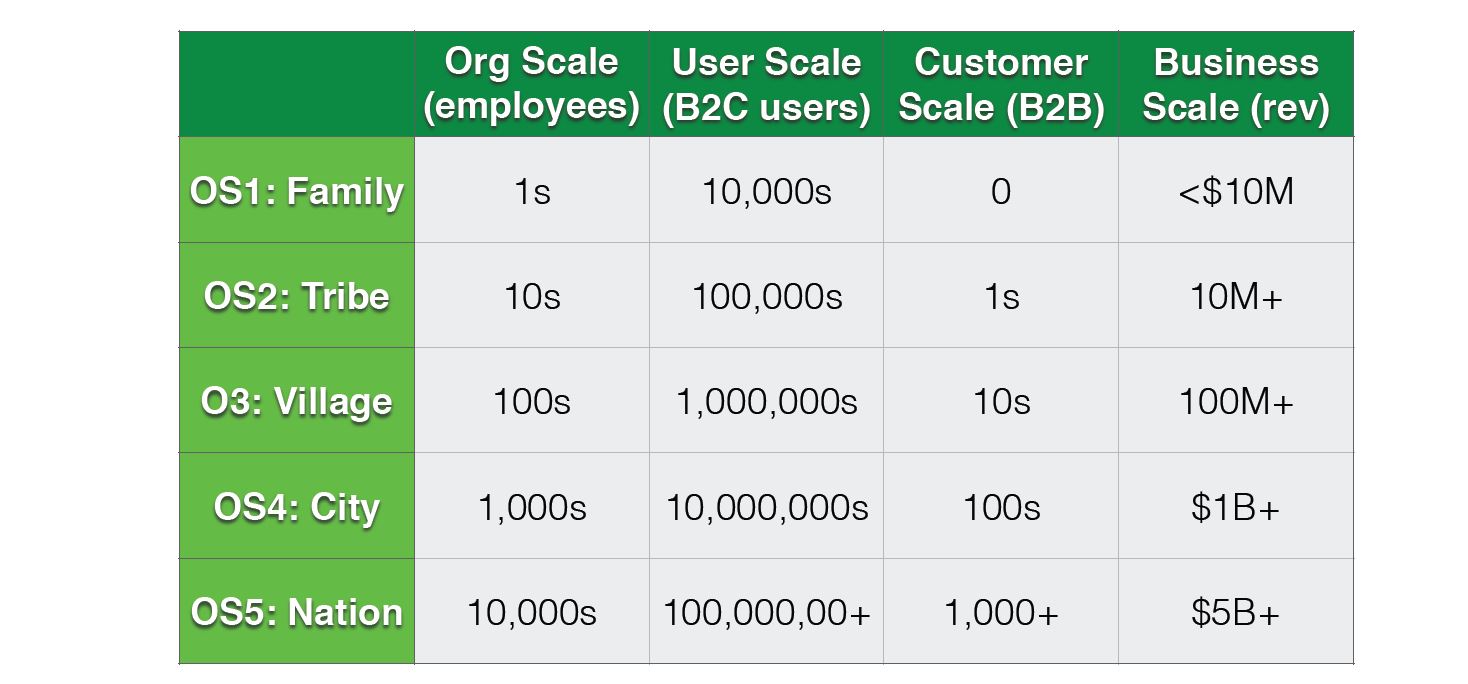

Reid Hoffman about Silicon Valley success: it’s not startup, it’s scaleup

In his introductory article about the course he is giving at Stanford, Reid Hoffman convincingly explains why Silicon Valley still leads in high-tech innovation: Silicon Valley is no longer unique in its ability to launch startups. Today, many parts of the world are rich in all of the necessary ingredients. There are bright young technical graduates from universities around the world. Venture capital has gone global. And, technology companies have R&D centers in many areas of the world. There has even been a global expansion of some of the more subtle elements such as a cultural acceptance of the potential failure of bold ventures. And, the belief in entrepreneurship is spreading everywhere in the world — creating a receptive culture in many cities. So, why does Silicon Valley continue to produce so many industry-transforming companies? The secret has moved past startups to scaleups.

The full article is CS183C: Technology-enabled Blitzscaling: The Visible Secret of Silicon Valley’s Success. I have watched the 1st class. Here it is with the slides:

And here are a few things I liked: